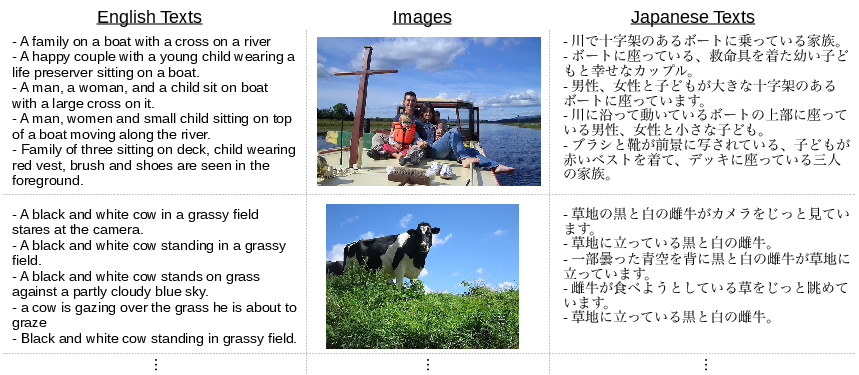

Japanese translation for UIUC Pascal Sentence Dataset

About the dataset

The UIUC Pascal Sentence Dataset (Rashtchian et al., 2010) contains 1000 images, each of which is annotated with five English sentences describing its content. This dataset was originally created for the study of sentence generation from images, which is one of the current hot topics in computer vision.

In our paper "Image-mediated learning for zero-shot cross-lingual document retrieval" (EMNLP 2015), to establish a new benchmark dataset for image-mediated Cross-Lingual Document Retrieval (CLDR), we added a Japanese translation for each English sentence. We release this Japanese dataset in this page.

This full dataset consists of triplets of English text, images and Japanese text. We also expect this dataset can be used in other tasks as well.

Download

Japanese Translation

Japanese Translation (divided into words by morphological analysis)

Japanese translation devided into words by space using the MeCab (Kudo et al., 2004) library, a morphological analysis tool.

Image Features (CaffeNet, GoogLeNet, VGGNet, FisherVector)

Scraping tool for original dataset

Usage

You can download Japanese dataset from the above links. Although these files do not contain the original dataset (images and English descriptions), python scripts are also avaliable to download original dataset. For more information, read "README.txt" in each zip file.

Paper

Image-Mediated Learning for Zero-Shot Cross-Lingual Document Retrieval

Reference

Citation

If you use this dataset for your publications, please cite:

Ruka Funaki, Hideki Nakayama,

"Image-mediated learning for zero-shot cross-lingual document retrieval",

Empirical Methods in Natural Language Processing (EMNLP), 2015.

Bibtex

@InProceedings{funaki2015,

author = "Ruka Funaki and Hideki Nakayama",

title = "Image-mediated learning for zero-shot cross-lingual document retrieval",

booktitle = "Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing (EMNLP)",

pages = "585-590",

year = "2015"

}